Our papers are the official record of our discoveries. They allow others to build on and apply our work. Each one is the result of many months of research, so we make a special effort to make our papers clear, inspiring and beautiful, and publish them in leading journals.


High energy physics

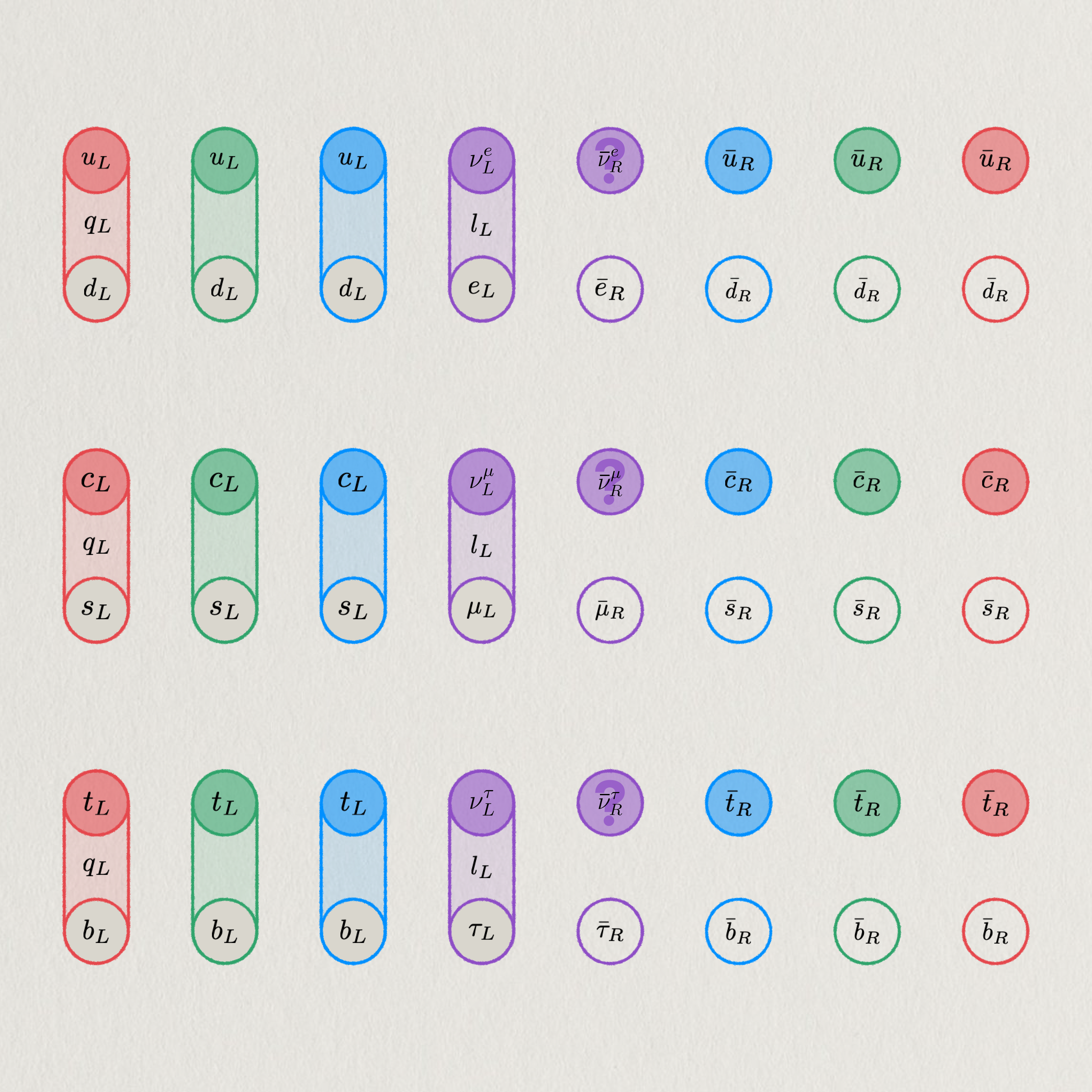

Three fermion families

A colour symmetry extension of baryon plus lepton symmetric gapped quantum topological order replaces families of massive sterile neutrinos.

High energy physics

Localisation in BV

Supersymmetric systems are classified via the BV formalism, extending localisation and identifying when integrals reduce to critical points.

High energy physics

Gauss–Bonnet via BV

A new proof of the Chern–Gauss–Bonnet theorem is derived using supersymmetry and BV localisation, reducing geometry to critical points.

Quantum physics

Multi-path transfer

Simultaneous propagation along multiple paths speeds up excitation transfer in long-range lattices, demonstrating a clear quantum advantage.

High energy physics

Three-family puzzle

Extending family symmetry by colour cancels a Standard Model global anomaly, linking the three colours to a preference for three fermion generations.

Statistical physics

An AI phase change

Weight pruning uncovers critical behaviour in deep neural networks with a sharp transition from functional cooperation to disordered failure.

Statistical physics

Deep learning simplicity

We give a theory for the output of deep-layered machines and show that, as the network depth increases, it is biased towards simple outputs.

AI-assisted maths

AI for error correction

Machine learning finds “champion” codes by predicting and optimising their minimum Hamming distance, a measure of a code’s robustness.
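The robustness measure named here can be computed exactly for small codes. The following is a minimal brute-force sketch, assuming a binary linear code given by a generator matrix with linearly independent rows. For a linear code, the minimum Hamming distance equals the smallest weight of any nonzero codeword. The function name and the [7, 4] Hamming-code example are illustrative, and this exhaustive search is not the paper's machine-learning method.

```python
from itertools import product


def min_hamming_distance(generator):
    """Brute-force minimum distance of a binary linear code.

    `generator` is a list of k rows, each a list of n bits (0/1), assumed
    linearly independent over GF(2). For a linear code the minimum distance
    equals the minimum Hamming weight over all nonzero codewords.
    """
    k = len(generator)
    n = len(generator[0])
    best = n
    # Enumerate all 2^k messages, skipping the all-zero message.
    for message in product((0, 1), repeat=k):
        if not any(message):
            continue
        # Codeword = message * G over GF(2): XOR together the selected rows.
        codeword = [0] * n
        for bit, row in zip(message, generator):
            if bit:
                codeword = [c ^ r for c, r in zip(codeword, row)]
        best = min(best, sum(codeword))
    return best


# Systematic generator matrix of the [7, 4] Hamming code.
HAMMING_7_4 = [
    [1, 0, 0, 0, 1, 1, 0],
    [0, 1, 0, 0, 1, 0, 1],
    [0, 0, 1, 0, 0, 1, 1],
    [0, 0, 0, 1, 1, 1, 1],
]

print(min_hamming_distance(HAMMING_7_4))  # 3
```

The loop visits all 2^k messages, so exact computation becomes expensive as the code dimension k grows, which is one motivation for cheaper ways of predicting the distance.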

Integrable systems

Exact hard-rod dynamics

A canonical quantum fluid model is solved exactly, revealing universal correlation patterns governed by Gaussian random-matrix ensembles.

High energy physics

Fermionic dark matter

Gravitational anomalies causing baryon and lepton number violation in the Standard Model are resolved using new fermionic topological orders.

Quantum physics

Towards optimal control

Time-optimal control of large quantum systems is computed efficiently by applying boundary conditions to a brachistochrone–Lax framework.

Machine learning

Boosting AI reasoning

By increasing the effective depth of neural networks, we improve their sequential reasoning abilities in tasks involving cellular automata.

Machine learning

Limits of attention

We demonstrate that transformer attention can only discriminate well at shorter context lengths, losing clarity as input length increases.

Representation theory

Braid representations

We demonstrate that the Lawrence–Krammer representation arises as a q-deformation of the symmetric square of the Burau representation.

High energy physics

Topological responses

Fractional conductivity between the nuclear and electromagnetic higher symmetries reveals four global Lie gauge groups of the Standard Model.

Algebraic geometry

Standard Model vacuum

The rich and intricate vacuum geometry of the Minimal Supersymmetric Standard Model—a complex manifold—is characterised for the first time.

Algebraic geometry

Analysing the vacuum

Birational methods in algebraic geometry are used to explicitly describe the vacuum structure of the Minimal Supersymmetric Standard Model.

Quantum physics

Fibonacci anyons

With IBM Quantum, we braid non-abelian Fibonacci anyons in string-net condensates to realise fault-tolerant universal quantum computation.

Representation theory

Group representations

We give a general approach to proving the irreducibility of representations of infinite-dimensional groups, within the framework of Ismagilov's conjecture.

Statistical mechanics

On universal dynamics

Quantum many-body systems share patterns of dynamics that are exactly described by tridiagonal matrices based on continuous Hahn polynomials.

High energy physics

Reinforcing spectra

We show reinforcement learning can be used to check whether a certain class of quantum field theory has a finite spectrum of stable particles.

AI-assisted maths

Learning integrability

We introduce an AI-based framework for finding solutions to the Yang-Baxter equation and discover hundreds of new integrable Hamiltonians.

High energy physics

Topological dark matter

Sterile neutrinos are replaced by topological order as dark matter candidates to counterbalance the Standard Model’s gravitational anomalies.

Machine learning

Multitasking memory

The abilities and power of a type of transformer model with memory are greatly improved by learning several key tasks at once during training.

Computational biology

Adaptability speeds evo

Based on computer simulations, we argue developmental plasticity accelerates evolution and drives organisms towards ever-greater complexity.

Quantum physics

Regularising CRT

Charge conjugation C, space reflection R, and time-reversal T operators are regularised in a quantum many-body Hilbert space on a discrete lattice.

Condensed matter theory

An 8-fold way for CRT

Varying the spacetime dimension in which fermions live shows that charge-conjugation C, space-reflection R and time-reversal T symmetries are 8-fold periodic.

High energy physics

C, P and T in fractions

Charge-conjugation, space-parity and time-reversal symmetries are shown to form noncommutative groups, including the order-16 Pauli group.

Neurocomputing

Circuits with memory

We derive dynamical equations for networks with memristors and the Lyapunov functions of purely memristive circuits to study their stability.

Number theory

Common energies

A polynomial criterion is obtained for a set to have small doubling, expressed in terms of the common additive energy of its subsets.
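The two quantities this criterion connects can be made concrete. Below is a minimal sketch using standard textbook definitions rather than anything specific to the paper: the additive energy E(A, B) counts quadruples (a1, a2, b1, b2) with a1 + b1 = a2 + b2, and the doubling constant of A is |A + A| / |A|. The function names and example sets are illustrative assumptions, and the paper's polynomial criterion itself is not implemented.

```python
from collections import Counter


def additive_energy(a, b):
    """E(A, B) = number of (a1, a2, b1, b2) with a1 + b1 == a2 + b2."""
    # reps[s] = number of pairs (x, y) in A x B with x + y == s;
    # summing reps[s]^2 counts matching pairs of pairs.
    reps = Counter(x + y for x in a for y in b)
    return sum(r * r for r in reps.values())


def doubling_constant(a):
    """|A + A| / |A| for a finite, nonempty set of integers A."""
    sumset = {x + y for x in a for y in a}
    return len(sumset) / len(a)


progression = set(range(10))               # arithmetic progression
scattered = {2 ** i for i in range(10)}    # powers of two

print(doubling_constant(progression), additive_energy(progression, progression))  # 1.9 670
print(doubling_constant(scattered), additive_energy(scattered, scattered))        # 5.5 190
```

The arithmetic progression has small doubling and large energy, while the powers of two have large doubling and only the trivial energy 2|A|^2 - |A|, the kind of relationship between doubling and energy that results of this type make quantitative.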

Quantum optimal control

Optimal transfer

We use the quantum brachistochrone method to design an optimal control strategy for the fastest quantum state transfer in long qubit chains.

Condensed matter theory

Topological boundary

We show that Weyl fermions and anomalous topological order in 4 dimensions can live on the edge of the same 5-dimensional superconductor.

Quantum optimal control

Three-qubit shortcut

We achieve maximal-fidelity state transfer in the fastest possible time for a 3-qubit chain by applying the quantum variational method.

Statistical mechanics

Fredholm meets Toeplitz

A new approach to the large-distance asymptotics of the finite-temperature deformation of the sine-kernel Fredholm determinant is discussed.

AI-assisted maths

Metaheuristic tilings

We use simulated annealing to efficiently construct all brane tilings that encode supersymmetric gauge theories and discover a new one.

Algebraic geometry

Linearising actions

We give a solution of the linearisation problem in the Cremona group of rank two over an algebraically closed field of characteristic zero.

High energy physics

A new leptogenesis

We propose that dark matter consists of topological order whose gapped anyon excitations decay to generate the Standard Model's lepton asymmetry.

Quantum field theory

Categorical symmetry

We demonstrate that the Standard Model's baryon minus lepton symmetry defect can become categorical by absorbing the gravitational anomaly.

Representation theory

Irreducible group action

We construct the unitary representation of an infinite-dimensional general linear group acting on a space and establish its irreducibility.

String theory

Futaki for reflexives

We compute Futaki invariants for gauge theories from D3-branes that probe toric Calabi-Yau singularities arising from reflexive polytopes.

Quantum gases

Vortex bound states

Modelling the behaviour of two interacting bosonic particles in a chiral, dimerized optical lattice shows the pair form a vortex bound state.

Quantum field theory

Continuous quivers

Continuous quivers enable exact Wilson loop calculation, reveal an emergent dimension, and raise tantalising questions on dual strings.

Quantum field theory

Peculiar betas tamed

Inconsistencies between two approaches to deriving beta functions in two-dimensional sigma models are resolved by adding heavy superpartners.

Algebraic geometry

Schön complete intersections

A uniform approach is described to schön complete intersections, a class of varieties that includes important types of objects from geometry, optimisation and physics.

Algebraic geometry

Slight degenerations

The tools used to study polynomial equations with indeterminate coefficients are extended to some important cases in which the coefficients are interrelated.

Condensed matter theory

Nonreciprocal breather

Producing the first examples of breathing solitons in one-dimensional non-reciprocal media allows their propagation dynamics to be analysed.

AI-assisted maths

On AI-driven discovery

Reviewing progress in the field of AI-assisted discovery for maths and theoretical physics reveals a triumvirate of different approaches.

AI-assisted maths

Triangulating polytopes

Machine learning generates desirable triangulations of geometric objects that are required for Calabi-Yau compactification in string theory.

AI-assisted maths

Convolution in topology

Using Inception, a convolutional neural network, we predict certain divisibility invariants of Calabi-Yau manifolds with up to 90% accuracy.

AI-assisted maths

Learning to be simple

Neural networks classify simple finite groups by generators, unlike earlier methods using Cayley tables, leading to a proven explicit criterion.

Neurocomputing

Spiky backpropagation

The training algorithm for digital neural networks is adapted and implemented entirely on an experimental chip inspired by brain physiology.

Condensed matter theory

Counting free fermions

We link the statistical properties of one-dimensional free-fermion systems initialised in half-occupied states with those initialised in alternating-occupancy states.

Condensed matter theory

A kicked polaron

Modelling the final state of a mobile impurity particle immersed in a one-dimensional quantum fluid after the abrupt application of a force.

Gravity

Brightening black holes

Classical Kerr amplitudes for rotating black holes are derived using insights from recent work in massive higher-spin quantum field theory.

Gravity

Spinning Root-Kerr

Two approaches that provide local formulae for Compton amplitudes of higher-spin massive objects in the quantum regime and classical limit.

Quantum field theory

PCM in arbitrary fields

The first exact solution for the vacuum state of an asymptotically free QFT in a general external field is found for the Principal Chiral Model.

Algebraic geometry

Sparse singularities

Geometric properties, including delta invariants, are computed for singular points defined by polynomials with indeterminate coefficients.

Algebraic geometry

Permuting the roots

The Galois group of a typical rational function is described and similar problems solved using the topology of braids and tropical geometry.

AI-assisted maths

Clifford invariants by ML

Coxeter transformations for root diagrams of simply-laced Lie groups are exhaustively computed then machine learned to very high accuracy.

Condensed matter theory

Strange kinks

A new non-linear mechanical metamaterial can sustain topological solitons, robust solitary waves that could have exciting applications.

Machine learning

The limits of LLMs

Large language models like ChatGPT can generate human-like text but businesses that overestimate their abilities risk misusing the technology.

Number theory

Multiplicativity of sets

Expanding the known multiplicative properties of large difference sets yields a new, quantitative proof concerning the structure of product sets.

Linear algebra

Infinite parallelotope

We study the geometry of finite-dimensional space as the dimension grows to infinity, with an emphasis on the height of the parallelotope.

Combinatorics

The popularity gap

A cyclic group with small difference set has a nonzero element for which the second largest number of representations is twice the average.

Combinatorics

Quadratic residues

Additive combinatorics sheds light on the distribution of the set of squares in the prime field, revealing a new upper bound for the number of gaps.

Condensed matter theory

Mobile impurity

Explicit computation of the impurity’s injection and ejection Green’s functions reveals a generalisation of the Kubo–Martin–Schwinger relation.

AI-assisted maths

AI for cluster algebras

Investigating cluster algebras through the lens of modern data science reveals an elegant symmetry in the quiver exchange graph embedding.

Number theory

Counting recursive divisors

Three new closed-form expressions give the number of recursive divisors and ordered factorisations, which were until now hard to compute.
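For comparison with closed forms, the number of ordered factorisations H(n) can be computed directly from the standard recursion H(1) = 1 and H(n) = sum of H(d) over divisors d of n with d < n. The following is a memoised sketch of that textbook recursion. It is not the paper's closed-form expressions, the function name is illustrative, and the recursive divisor function itself is not implemented here.

```python
from functools import lru_cache


@lru_cache(maxsize=None)
def ordered_factorisations(n: int) -> int:
    """Number of ways to write n as an ordered product of factors > 1.

    Uses H(1) = 1 and H(n) = sum of H(d) over divisors d of n with d < n:
    choosing the first factor f = n // d leaves d still to be factorised.
    """
    if n < 1:
        raise ValueError("n must be a positive integer")
    if n == 1:
        return 1
    return sum(ordered_factorisations(d) for d in range(1, n) if n % d == 0)


print([ordered_factorisations(n) for n in range(1, 13)])
# [1, 1, 1, 2, 1, 3, 1, 4, 2, 3, 1, 8]
```

For example, 12 has eight ordered factorisations: 12, 2·6, 6·2, 3·4, 4·3, 2·2·3, 2·3·2 and 3·2·2. The recursion loops over all smaller integers, so it is only practical for small n, which is where closed-form expressions become valuable.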

Algebraic geometry

Bundled Laplacians

By approximating the basis of eigenfunctions, we computationally determine the harmonic modes of bundle-valued Laplacians on Calabi-Yau manifolds.

Number theory

Recursive divisor properties

The recursive divisor function has a simple Dirichlet series that relates it to the divisor function and other standard arithmetic functions.

General relativity

Absorption with amplitudes

How gravitational waves are absorbed by a black hole is understood, for the first time, through effective on-shell scattering amplitudes.

Quantum field theory

Peculiar betas

The beta function for a class of sigma models is found not to be geometric, but rather has an elegant form in the context of algebraic data.

Machine learning

DeepPavlov dream

A new open-source platform is specifically tailored for developing complex dialogue systems, like generative conversational AI assistants.

AI-assisted maths

Learning 3-manifolds

3-manifolds represented as isomorphism signatures of their triangulations and associated Pachner graphs are analysed with machine learning.

AI-assisted maths

Computing Sasakians

Topological quantities for the Calabi-Yau link construction of G2 manifolds are computed and machine learnt with high performance scores.

Computational linguistics

Cross-lingual knowledge

Models trained on a Russian topical dataset of knowledge-grounded human–human conversations can perform real-world tasks across languages.

Combinatorics

Representation for sum-product

A new way to estimate indices via representation theory reveals links to the sum-product phenomena and Zaremba’s conjecture in number theory.

Machine learning

Speaking DNA

A family of transformer-based DNA language models can interpret genomic sequences, opening new possibilities for complex biological research.

Algebraic geometry

Genetic polytopes

Genetic algorithms, which solve optimisation problems in a natural selection-inspired way, reveal previously unconstructed Calabi-Yau manifolds.

Condensed matter theory

Spin diffusion

The spin-spin correlation function of the Hubbard model reveals that finite temperature spin transport in one spatial dimension is diffusive.

Condensed matter theory

Spin-charge separation

A transformation for spin and charge degrees of freedom in one-dimensional lattice systems allows direct access to the dynamical correlations.

Statistical physics

Kauffman cracked

Surprisingly, the number of attractors in the critical Kauffman model with connectivity one grows exponentially with the size of the network.

Algebraic geometry

Analysing amoebae

Genetic symbolic regression methods reveal the relationship between amoebae from tropical geometry and the Mahler measure from number theory.

Combinatorics

Ungrouped machines

A new connection between continued fractions and the Bourgain–Gamburd machine reveals a girth-free variant of this widely celebrated theorem.

Complex systems

Complex digital cities

A complexity-science approach to digital twins of cities views them as self-organising phenomena, instead of machines or logistic systems.

Group theory

On John McKay

This obituary celebrates the life and work of John Keith Stuart McKay, highlighting the mathematical miracles for which he will be remembered.

Machine learning

BERT enhanced with recurrence

The quadratic complexity of attention in transformers is tackled by combining token-based memory and segment-level recurrence, using the Recurrent Memory Transformer (RMT).

AI-assisted maths

Free energy and learning

Using the free energy principle to derive multiple theories of associative learning allows us to combine them into a single, unifying framework.

Number theory

Higher energies

Generalising the recent Kelley–Meka result on sets avoiding arithmetic progressions of length three leads to developments in the theory of the higher energies.

Combinatorics

In life, there are few rules

The bipartite nature of regulatory networks means gene-gene logics are composed, which severely restricts which ones can show up in life.

Number theory

Random Chowla conjecture

The distribution of partial sums of a Steinhaus random multiplicative function, evaluated at polynomials of a given form, converges to the standard complex Gaussian.

Algebraic geometry

Symmetric spatial curves

We study the geometry of generic spatial curves with a symmetry in order to understand the Galois group of a family of sparse polynomials.

Statistical physics

Landau meets Kauffman

Insights from number theory suggest a new way to solve the critical Kauffman model, giving new bounds on the number and length of attractors.

AI-assisted maths

AI for arithmetic curves

AI can predict invariants of low-genus arithmetic curves, including those key to the Birch–Swinnerton-Dyer conjecture, one of the Millennium Prize Problems.

Statistical physics

Multiplicative loops

The dynamics of the Kauffman network can be expressed as a product of the dynamics of its disjoint loops, revealing a new algebraic structure.

Synthetic biology

Cell soup in screens

Bursting cells can introduce noise in transcription factor screens, but modelling this process allows us to discern true counts from false.

Gravity

Black hole symmetry

Effective field theories for Kerr black holes are studied, showing that the 3-point Kerr amplitudes are uniquely predicted by higher-spin gauge symmetry.

Statistical physics

Network renormalization

Applying diffusion-based graph operators to complex networks identifies the proper spatiotemporal scales by overcoming small-world effects.

AI-assisted maths

Clustered cluster algebras

Cluster variables in Grassmannian cluster algebras can be classified with HPC by applying the tableaux method up to a fixed number of columns.

Number theory

Elliptical murmurations

Certain properties of the bivariate cubic equations used to prove Fermat’s Last Theorem exhibit flocking patterns, machine learning reveals.

Number theory

Bounding Zaremba’s conjecture

Using methods related to the Bourgain–Gamburd machine refines the previous bound on Zaremba’s conjecture in the theory of continued fractions.

String theory

World in a grain of sand

A few-shot learning algorithm finds that the vast string landscape could be reduced: seeing only a tiny fraction is enough to predict the rest.

Neurocomputing

Optimal electronic reservoirs

Balancing memory from linear components with nonlinearities from memristors optimises the computational capacity of electronic reservoirs.

String theory

Gauge theory and integrability

The algebra of a toric quiver gauge theory recovers the Bethe ansatz, revealing the relation between gauge theories and integrable systems.

Evolvability

Flowers of immortality

The eigenvalues of the mortality equation fall into two classes—the flower and the stem—but only the stem eigenvalues control the dynamics.

Combinatorics

Structure of genetic computation

The structural and functional building blocks of gene regulatory networks correspond, which tells us how genetic computation is organised.

Gravity

AI classifies space-time

A neural network learns to classify different types of spacetime in general relativity according to their algebraic Petrov classification.

String theory

Algebra of melting crystals

Certain states in quantum field theories are described by the geometry and algebra of melting crystals via properties of partition functions.

Combinatorics

Set additivity and growth

The additive dimension of a set, which is the size of a maximal dissociated subset, is closely connected to the rapid growth of higher sumsets.

AI-assisted maths

Machine learning Hilbert series

Neural networks find efficient ways to compute the Hilbert series, an important counting function in algebraic geometry and gauge theory.

AI-assisted maths

Line bundle connections

Neural networks find numerical solutions to Hermitian Yang-Mills equations, a difficult system of PDEs crucial to mathematics and physics.

AI-assisted maths

Calabi-Yau anomalies

Unsupervised machine-learning of the Hodge numbers of Calabi-Yau hypersurfaces detects new patterns with an unexpected linear dependence.

String theory

Mahler measure for quivers

Mahler measure from number theory is used for the first time in physics, yielding “Mahler flow” which extrapolates different phases in QFT.

Number theory

Recursively divisible numbers

Recursively divisible numbers are a new kind of number that are highly divisible, whose quotients are highly divisible, and so on, recursively.

AI-assisted maths

Learning the Sato–Tate conjecture

Machine-learning methods can distinguish between Sato-Tate groups, promoting a data-driven approach for problems involving Euler factors.

Machine learning

Universes as big data

Machine-learning is a powerful tool for sifting through the landscape of possible Universes that could derive from Calabi-Yau manifolds.

Network theory

True scale-free networks

The underlying scale invariance properties of naturally occurring networks are often clouded by finite-size effects due to the sample data.

Number theory

Reflexions on Mahler

Using Newton polynomials from reflexive polygons, we find that the Mahler measure and dessins d’enfants are in one-to-one correspondence.

Graph theory

Transitions in loopy graphs

The generation of large graphs with a controllable number of short loops paves the way for building more realistic random networks.

Statistical physics

Coexistence in diverse ecosystems

Scale-invariant plant clusters explain the ability of a diverse range of plant species to coexist in ecosystems such as Barro Colorado.

Neural networks

Quick quantum neural nets

The notion of quantum superposition speeds up the training process for binary neural networks and ensures that their parameters are optimal.

Quantum physics

Going, going, gone

A solution to the information paradox uses standard quantum field theory to show that black holes can evaporate in a predictable way.

Mathematical medicine

Tumour infiltration

A delicate balance between white blood cell protein expression and the molecules on the surface of tumour cells determines cancer prognoses.

Neurocomputing

Breaking classical barriers

Circuits of memristors, resistors with memory, can exhibit instabilities which allow classical tunnelling through potential energy barriers.

String theory

QFT and kids’ drawings

Grothendieck’s “children’s drawings”, a type of bipartite graph, link number theory, geometry, and the physics of conformal field theory.

Machine learning

Neurons on amoebae

Machine learning of 2-dimensional amoebae from algebraic geometry and string theory recovers the complicated conditions arising from so-called lopsidedness.

Group theory

New approaches to the Monster

An edited record of the last set of lectures given by John McKay, originator of the Moonshine conjectures, whose proof earned Borcherds the Fields Medal.

Combinatorics

Combinatoric topological strings

We find a physical interpretation, in terms of combinatorial topological string theory, of a classic result in finite group theory.

Network theory

Physics of financial networks

Statistical physics contributes to new models and metrics for the study of financial network structure, dynamics, stability and instability.

Economic complexity

Channels of contagion

Fire sales of common asset holdings can whip through a channel of contagion between banks, insurance companies and investment funds.

Financial risk

Risky bank interactions

Networks where risky banks are mostly exposed to other risky banks have higher levels of systemic risk than those with stable bank interactions.

Mathematical medicine

Cancer and coronavirus

Cancer patients who contract and recover from SARS-CoV-2 infection exhibit long-term immune system weaknesses, depending on the type of cancer.

Particle physics

Scale of non-locality

The number of particles in a higher derivative theory of gravity relates to its effective mass scale, which signals the theory’s viability.

Evolvability

I want to be forever young

The mortality equation governs the dynamics of an evolving population with a given maximum age, offering a theory for programmed ageing.

Inference

Exact linear regression

Exact methods supersede approximations used in high-dimensional linear regression to find correlations in statistical physics problems.

Number theory

Energy bounds for roots

Bounds for additive energies of modular roots can be generalised and improved with tools from additive combinatorics and algebraic number theory.

Number theory

Ample and pristine numbers

Parallels between the perfect and abundant numbers and their recursive analogs point to deeper structure in the recursive divisor function.

Financial markets

Network valuation in finance

Consistent valuation of interbank claims within an interconnected financial system can be found with a recursive update of banks' equities.

Neural networks

Deep layered machines

The ability of deep neural networks to generalize can be unraveled using path integral methods to compute their typical Boolean functions.

Statistical physics

Replica analysis of overfitting

Statistical methods that normally fail for very high-dimensional data can be rescued via mathematical tools from statistical physics.

Theory of innovation

Taming complexity

Insights from biology, physics and business shed light on the nature and costs of complexity and how to manage it in business organizations.

Inference, Statistical physics

Replica clustering

We optimize Bayesian data clustering by mapping the problem to the statistical physics of a gas and calculating the lowest entropy state.

Theory of innovation

Recursive structure of innovation

A theoretical model of recursive innovation suggests that new technologies are recursively built up from new combinations of existing ones.

Network theory

Bursting dynamic networks

A mathematical model captures the temporal and steady state behaviour of networks whose two sets of nodes either generate or destroy links.

Economic complexity

Renewable resource management

Modern portfolio theory inspires a strategy for allocating renewable energy sources which minimises the impact of production fluctuations.

Thermodynamics

Energy harvesting with AI

Machine learning techniques enhance the efficiency of energy harvesters by implementing reversible energy-conserving operations.

Geometry

Geometry of discrete space

A phase transition creates the geometry of the continuum from discrete space, but it needs disorder if it is to have the right metric.

Neurocomputing

Memristive networks

A simple solvable model of memristive networks suggests a correspondence between the asymptotic states of memristors and the Ising model.

Statistical physics

Physics of networks

Statistical physics harnesses links between maximum entropy and information theory to capture null model and real-world network features.

Theory of innovation

The rate of innovation

The distribution of product complexity helps explain why some technology sectors tend to exhibit faster innovation rates than other sectors.

Thermodynamics

One-shot statistic

One-shot analogs of fluctuation-theorem results help unify these two approaches for small-scale, nonequilibrium statistical physics.

Complex networks

Information asymmetry

Network users who have access to the network’s most informative node, as quantified by a novel index, the InfoRank, have a competitive edge.

Quantum physics

A Hamiltonian recipe

An explicit recipe for defining the Hamiltonian in general probabilistic theories, which have the potential to generalise quantum theory.

Neurocomputing

Solvable memristive circuits

Exact solutions for the dynamics of interacting memristors predict whether they relax to higher or lower resistance states given random initialisations.

Inference

Grain shape inference

The distributions of size and shape of a material’s grains can be constructed from a 2D slice of the material and electron diffraction data.

Ignoble research

Volunteer clouds

A novel approach to volunteer clouds outperforms traditional distributed task scheduling algorithms in the presence of intensive workloads.

Financial networks

From ecology to finance

Bipartite networks model the structures of ecological and economic real-world systems, enabling hypothesis testing and crisis forecasting.

Graph theory

Hypercube eigenvalues

Hamming balls, subgraphs of the hypercube, maximise the graph’s largest eigenvalue exactly when the dimension of the cube is large enough.

Technological progress

Forecasting technology deployment

Forecast errors for simple experience curve models facilitate more reliable estimates for the costs of technology deployment.

Financial networks

Hierarchies in directed networks

An iterative version of a method to identify hierarchies and rankings of nodes in directed networks can partly overcome its resolution limit.

Graph theory

Exactly solvable random graphs

An explicit analytical solution reproduces the main features of random graph ensembles with many short cycles under strict degree constraints.

Financial networks

The interbank network

The large-scale structure of the interbank network changes drastically in times of crisis due to the effect of measures from central banks.

Theory of innovation

The science of strategy

The usefulness of components and the complexity of products inform the best strategy for innovation at different stages of the process.

Theory of materials

Dirac cones in 2D borane

The structure of two-dimensional borane, a new semi-metallic single-layered material, has two Dirac cones that meet right at the Fermi energy.

Financial risk

Modelling financial systemic risk

Complex networks model the links between financial institutions and how these channels can transition from diversifying to propagating risk.

Mathematical medicine

Bayesian analysis of medical data

Bayesian networks describe the evolution of orthodontic features in patients receiving treatment versus no treatment for malocclusion.

Theory of innovation

The secret structure of innovation

Firms can harness the shifting importance of component building blocks to build better products and services and hence increase their chances of sustained success.

Neural networks

Quantum neural networks

We generalise neural networks into a quantum framework, demonstrating the possibility of quantum auto-encoders and teleportation.

Network theory

Debunking in a world of tribes

When people operate in echo chambers, they focus on information adhering to their system of beliefs. Debunking them is harder than it seems.

Neurocomputing

Memristive networks and learning

Analysis of the network’s internal memory dynamics shows that memristive networks preserve memory and have the ability to learn.

Neurocomputing

Dynamics of memristors

Exact equations of motion provide an analytical description of the evolution and relaxation properties of complex memristive circuits.

Financial networks

Bipartite trade network

A new algorithm unveils complicated structures in the bipartite mapping between countries and products of the international trade network.

Sphere packing

3d grains from 2d slices

Moment-based methods provide a simple way to describe a population of spherical particles and extract 3d information from 2d measurements.

Complex systems

Disentangling links in networks

Inference from single snapshots of temporal networks can misleadingly group communities if the links between snapshots are correlated.

Thermodynamics

Quantum jumps in thermodynamics

Spectroscopy experiments show that energy shifts due to photon emission from individual molecules satisfy a fundamental quantum relation.

Financial markets

Financial network reconstruction

Statistical mechanics concepts reconstruct connections between financial institutions and the stock market, despite limited data disclosure.

Financial risk

Pathways towards instability

Processes believed to stabilize financial markets can drive them towards instability by creating cyclical structures that amplify distress.

Thermodynamics

Worst-case work entropic equality

A new equality which depends on the maximum entropy describes the worst-case amount of work done by finite-dimensional quantum systems.

Theory of innovation

Serendipity and strategy

In systems of innovation, the relative usefulness of different components changes as the number of components we possess increases.

Graph theory

Spectral partitioning

The spectral density of graph ensembles provides an exact solution to the graph partitioning problem and helps detect community structure.

Complex networks, Financial risk

Non-linear distress propagation

Non-linear models of distress propagation in financial networks characterise key regimes where shocks are either amplified or suppressed.

Financial risk

Immunisation of systemic risk

According to sandpile models, targeted immunisation policies limit distress propagation and prevent system-wide crises in financial networks.

Complex networks

Optimal growth rates

An extension of the Kelly criterion maximises the growth rate of multiplicative stochastic processes when limited resources are available.

Quantum computing

Tunnelling interpreted

Quantum tunnelling only occurs if either the Wigner function is negative, or the tunnelling rate operator has a negative Wigner function.

Financial risk

The price of complexity

Increasing the complexity of the network of contracts between financial institutions decreases the accuracy of estimating systemic risk.

Thermodynamics

Photonic Maxwell's demon

With inspiration from Maxwell’s classic thought experiment, it is possible to extract macroscopic work from microscopic measurements of photons.

Graph theory

Eigenvalues of neutral networks

The principal eigenvalue of small neutral networks determines their robustness, and is bounded by the logarithm of the number of vertices.

Network theory

Cascades in flow networks

Coupled distribution grids are more vulnerable to cascading systemic failure, but they have larger safe regions within their networks.

Percolation theory

Self-organising adaptive networks

An adaptive network of oscillators in fragmented and incoherent states can re-organise itself into connected and synchronized states.

Thermodynamics

Optimal heat exchange networks

Compact heat exchangers can be designed to run at low power if the exchange is concentrated in a crumpled surface fed by a fractal network.

Financial markets

Instability in complex ecosystems

The community matrix of a complex ecosystem captures the population dynamics of interacting species and their transitions to unstable abundances.

Discrete dynamics

Form and function in gene networks

The structural properties of a network motif predict its functional versatility and relate to gene regulatory networks.

Percolation theory

Clusters of neurons

Percolation theory shows that the formation of giant clusters of neurons relies on a few parameters that could be measured experimentally.

Gravity

Cyclic isotropic cosmologies

In an infinitely bouncing Universe, the scalar field driving the cosmological expansion and contraction carries information between phases.

Technological progress

Predicting technological progress

A formulation of Moore’s law estimates the probability that a given technology will outperform another at a certain point in the future.

Percolation theory

Bootstrap percolation models

A subset of bootstrap percolation models, which stabilise systems of cells on infinite lattices, exhibits non-trivial phase transitions.

Financial markets

News sentiment and price dynamics

News sentiment analysis and web browsing data are uninformative on their own but, taken together, predict fluctuations in stock prices.

Network theory

Communities in networks

A new tool derived from information theory quantitatively identifies trees, hierarchies and community structures within complex networks.

Financial markets

Effect of Twitter on stock prices

When the number of tweets about an event peaks, the sentiment of those tweets correlates strongly with abnormal stock market returns.

Complex networks

Democracy in networks

Analysis of the hyperbolicity of real-world networks distinguishes between those which are aristocratic and those which are democratic.

Biological networks

Protein interaction experiments

Properties of protein interaction networks test the reliability of data and hint at the underlying mechanism by which proteins recruit each other.

Graph theory

Erdős-Ko-Rado theorem analogue

A random analogue of the Erdős-Ko-Rado theorem sheds light on its stability in a previously unexplored region of parameter space.

Complex networks

Collective attention to politics

Tweet volume is a good indicator of political parties' success in elections when measured over a time window chosen to minimise noise.

Thermodynamics

A measure of majorization

Single-shot information theory inspires a new formulation of statistical mechanics which measures the optimal guaranteed work of a system.

Financial risk

DebtRank and shock propagation

A dynamical microscopic theory of instability for financial networks reformulates the DebtRank algorithm in terms of basic accounting principles.

Statistical physics

Spin systems on Bethe lattices

Exact equations for the thermodynamic quantities of lattices made of d-dimensional hypercubes are obtainable with the Bethe-Peierls approach.

Financial risk, Network theory

Fragility of the interbank network

The speed of a financial crisis outbreak sets the maximum delay before intervention by central authorities is no longer effective.

Theory of materials

Structure and stability of salts

The stable structures of calcium and magnesium carbonate at high pressures are crucial for understanding the Earth's deep carbon cycle.

Economic complexity

Dynamics of economic complexity

Dynamical systems theory predicts the growth potential of countries with heterogeneous patterns of evolution where regression methods fail.

Neurocomputing

From memory to scale-free

A local model of preferential attachment with short-term memory generates scale-free networks, which can be readily computed by memristors.

Percolation theory

Maximum percolation time

A simple formula gives the maximum time it takes for an n × n grid to become entirely infected under a bootstrap percolation process.

Graph theory

Random graphs with short loops

The analysis of real networks which contain many short loops requires novel methods, because they break the assumptions of tree-like models.

Economic complexity

Taxonomy and economic growth

Less developed countries have to learn simple capabilities in order to start a stable industrialization and development process.

Sphere packing

Viscosity of polydisperse spheres

A fast and simple way to estimate the packing fraction, a measure of how closely polydisperse spheres crowd around each other, agrees well with rheological data.

Financial risk

Networks of credit default swaps

Time series data from networks of credit default swaps display no early warnings of financial crises without additional macroeconomic indicators.

Graph theory

Entropies of graph ensembles

Explicit formulae for the Shannon entropies of random graph ensembles provide measures to compare and reproduce their topological features.

Network theory

Easily repairable networks

When networks come under attack, a repairable architecture is superior to, and globally distinct from, an architecture that is robust.

Quantum thermodynamics

Entanglement typicality

A review of the achievements concerning typical bipartite entanglement for random quantum states involving a large number of particles.

Theory of materials

Predicting interface structures

Generating random structures in the vicinity of a material’s defect predicts the low- and high-energy atomic structures at the grain boundary.

Percolation theory

Percolation on Galton-Watson trees

The critical probability for bootstrap percolation, a process which mimics the spread of an infection in a graph, is bounded for Galton-Watson trees.

Financial risk

Memory effects in stock dynamics

The likelihood of stock prices bouncing on specific values increases due to memory effects in the time series data of the price dynamics.

Network theory

Self-healing complex networks

The interplay between redundancies and smart reconfiguration protocols can improve the resilience of networked infrastructures to failures.

Fractals

Structural imperfections

Fractal structures need very little mass to support a load, but in current designs this makes them vulnerable to manufacturing errors.

Financial risk

Default cascades in networks

The optimal architecture of a financial system is only dependent on its topology when the market is illiquid, and no topology is always superior.

Sphere packing

Random close packing fractions

Lognormal distributions, and mixtures of them, are a useful model for the size distribution in emulsions and sediments.

Biological networks

Multitasking immune networks

The immune system must simultaneously recall multiple defense strategies because many antigens can attack the host at the same time.

Economic complexity

Metrics for global competitiveness

A new non-monetary metric captures diversification, a dominant effect on the globalised market, and the effective complexity of products.

Economic complexity

Measuring the intangibles

Coupled non-linear maps extract information about the competitiveness of countries and the complexity of their products from trade data.

Network theory

The temperature of networks

A new concept, graph temperature, enables the prediction of distinct topological properties of real-world networks simultaneously.

Network theory

Scales in weighted networks

Information theory fixes weighted networks’ degeneracy issues with a generalisation of binary graphs and an optimal scale of link intensities.

Mathematical medicine

Multi-tasking in immune networks

Associative networks with different loads model the ability of the immune system to respond simultaneously to multiple distinct antigen invasions.

Fractals

Gentle loads

The most efficient load-bearing fractals are designed as big structures under gentle loads, a common situation in aerospace applications.

Financial networks

Interbank controllability

Complex networks detect the driver institutions of an interbank market and ascertain that intervention policies should be time-scale dependent.

Financial networks

Reconstructing credit

New mathematical tools can help infer financial networks from partial data to understand the propagation of distress through the network.

Financial networks

Complex derivatives

Network-based metrics to assess systemic risk and the importance of financial institutions can help tame the financial derivatives market.

Technological progress

Organized knowledge economies

The Yule-Simon distribution describes the diffusion of knowledge and ideas in a social network which in turn influences economic growth.

Financial risk

Bootstrapping topology and risk

Information about 10% of the links in a complex network is sufficient to reconstruct its main features and resilience with the fitness model.

Network theory

Weighted network evolution

A statistical procedure identifies dominant edges within weighted networks to determine whether a network has reached its steady state.

Fractals

Ultralight fractal structures

The transition from solid to hollow beams changes the scaling of stability versus loading analogously to increasing the hierarchical order by one.

Fractals

Hierarchical space frames

A systematic way to vary the power-law scaling relations between loading parameters and volume of material aids the hierarchical design process.

Economic complexity

Network analysis of export flows

Network theory finds unexpected interactions between the number of products a country produces and the number of countries producing each product.

Economic complexity

Metric for fitness and complexity

A quantitative assessment of the non-monetary advantage of diversification represents a country’s hidden potential for development and growth.

Mathematical medicine

Networks for medical data

Network analysis of diagnostic data identifies combinations of the key factors which cause Class III malocclusion and how they evolve over time.

Financial markets

Search queries predict stocks

Web search queries about a given stock, though generated by the seemingly uncoordinated activity of many users, can anticipate its trading peaks.

Graph theory

Unbiased randomisation

Unbiased randomisation processes generate sophisticated synthetic networks for modelling and testing the properties of real-world networks.

Network theory

Robust and assortative

Spectral analysis shows that disassortative networks exhibit a higher epidemiological threshold and are therefore easier to immunize.

Network theory

Clustering inverted

Edge multiplicity, the number of triangles attached to an edge, is a powerful analytic tool for understanding and generalising network properties.

Biological networks

What you see is not what you get

Methods from tailored random graph theory reveal the relation between true biological networks and the often-biased samples taken from them.

Ignoble research

Shear elastic deformation in cells

Analysis of the linear elastic behaviour of plant cell dispersions improves our understanding of how to stabilise and texturise food products.

Statistical physics

Dynamics of Ising chains

A transfer operator formalism solves the macroscopic dynamics of disordered Ising chain systems which are relevant for ageing phenomena.

Theory of materials

Diffusional liquid-phase sintering

A Monte Carlo model simulates the microstructural evolution of metallic and ceramic powders during liquid-phase sintering, a consolidation process.

Graph theory

Tailored random graph ensembles

New mathematical tools quantify the topological structure of large directed networks which describe how genes interact within a cell.

Information theory

Assessing self-assembly

The information needed to self-assemble a structure quantifies its modularity and explains the prevalence of certain structures over others.

Sphere packing

Ever-shrinking spheres

Techniques from random sphere packing predict the dimension of the Apollonian gasket, a fractal made up of non-overlapping hyperspheres.

Discrete dynamics

Random cellular automata

Of the 256 elementary cellular automata, 28 exhibit random behaviour over time, yet spatio-temporal currents still lurk beneath.

Statistical physics

Single-elimination competition

In single-elimination competition, the best indicator of success is a player's wealth: the accumulated wealth of all the players they have defeated.