Our papers are the official record of our discoveries. They allow others to build on and apply our work. Each one is the result of many months of research, so we make a special effort to make our papers clear, inspiring and beautiful, and publish them in leading journals.

Statistical physics

An AI phase change

Weight pruning uncovers critical behaviour in deep neural networks with a sharp transition from functional cooperation to disordered failure.

AI-assisted maths

AI for error correction

Machine learning finds “champion” codes by predicting and optimising their minimum Hamming distance, a measure of a code’s robustness.

Algebraic geometry

Standard Model vacuum

The rich and intricate vacuum geometry of the Minimal Supersymmetric Standard Model—a complex manifold—is characterised for the first time.

Algebraic geometry

Analysing the vacuum

Birational methods in algebraic geometry are used to explicitly describe the vacuum structure of the Minimal Supersymmetric Standard Model.

High energy physics

Reinforcing spectra

We show reinforcement learning can be used to check whether a certain class of quantum field theory has a finite spectrum of stable particles.

AI-assisted maths

Metaheuristic tilings

We use simulated annealing to efficiently construct all brane tilings that encode supersymmetric gauge theories and discover a new one.

String theory

Futaki for reflexives

We compute Futaki invariants for gauge theories from D3-branes that probe toric Calabi-Yau singularities arising from reflexive polytopes.

AI-assisted maths

On AI-driven discovery

Reviewing progress in the field of AI-assisted discovery for maths and theoretical physics reveals a triumvirate of different approaches.

AI-assisted maths

Triangulating polytopes

Machine learning generates desirable triangulations of geometric objects that are required for Calabi-Yau compactification in string theory.

AI-assisted maths

Convolution in topology

Using Inception, a convolutional neural network, we predict certain divisibility invariants of Calabi-Yau manifolds with up to 90% accuracy.

AI-assisted maths

Learning to be simple

Neural networks classify finite simple groups from their generators, rather than from Cayley tables as in earlier methods, leading to a proven explicit criterion.

AI-assisted maths

Clifford invariants by ML

Coxeter transformations for the root diagrams of simply-laced Lie groups are exhaustively computed and then machine-learned to very high accuracy.

AI-assisted maths

AI for cluster algebras

Investigating cluster algebras through the lens of modern data science reveals an elegant symmetry in the quiver exchange graph embedding.

Algebraic geometry

Bundled Laplacians

By approximating the basis of eigenfunctions, we computationally determine the harmonic modes of bundle-valued Laplacians on Calabi-Yau manifolds.

AI-assisted maths

Learning 3-manifolds

3-manifolds represented as isomorphism signatures of their triangulations and associated Pachner graphs are analysed with machine learning.

AI-assisted maths

Computing Sasakians

Topological quantities for the Calabi-Yau link construction of G2 manifolds are computed and machine learnt with high performance scores.

Algebraic geometry

Genetic polytopes

Genetic algorithms, which solve optimisation problems in a natural selection-inspired way, reveal previously unconstructed Calabi-Yau manifolds.

Algebraic geometry

Analysing amoebae

Genetic symbolic regression methods reveal the relationship between amoebae from tropical geometry and the Mahler measure from number theory.

Group theory

On John McKay

This obituary celebrates the life and work of John Keith Stuart McKay, highlighting the mathematical miracles for which he will be remembered.

AI-assisted maths

AI for arithmetic curves

AI can predict invariants of low-genus arithmetic curves, including those key to the Birch-Swinnerton-Dyer conjecture, a Millennium Prize Problem.

AI-assisted maths

Clustered cluster algebras

Cluster variables in Grassmannian cluster algebras can be classified with high-performance computing (HPC) by applying the tableaux method up to a fixed number of columns.

Number theory

Elliptical murmurations

Machine learning reveals that certain properties of the bivariate cubic equations (elliptic curves) used to prove Fermat’s Last Theorem exhibit flocking patterns.

String theory

World in a grain of sand

A few-shot learning algorithm finds that the vast string landscape could be reduced: seeing only a tiny fraction of it is enough to predict the rest.

Evolvability

Flowers of immortality

The eigenvalues of the mortality equation fall into two classes—the flower and the stem—but only the stem eigenvalues control the dynamics.

Gravity

AI classifies spacetime

A neural network learns to classify different types of spacetime in general relativity according to their algebraic Petrov classification.

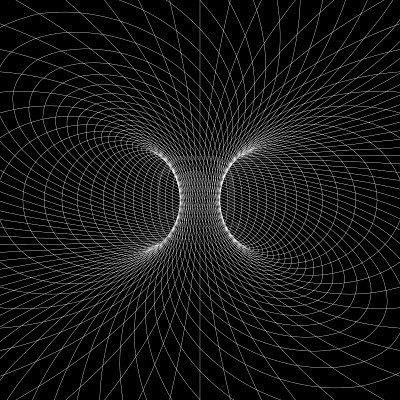

String theory

Algebra of melting crystals

Certain states in quantum field theories are described by the geometry and algebra of melting crystals via properties of partition functions.

AI-assisted maths

Machine learning Hilbert series

Neural networks find efficient ways to compute the Hilbert series, an important counting function in algebraic geometry and gauge theory.

AI-assisted maths

Line bundle connections

Neural networks find numerical solutions to Hermitian Yang-Mills equations, a difficult system of PDEs crucial to mathematics and physics.

AI-assisted maths

Calabi-Yau anomalies

Unsupervised machine learning of the Hodge numbers of Calabi-Yau hypersurfaces detects new patterns with an unexpected linear dependence.

String theory

Mahler measure for quivers

The Mahler measure from number theory is used for the first time in physics, yielding a “Mahler flow” that extrapolates different phases in QFT.

AI-assisted maths

Learning the Sato–Tate conjecture

Machine-learning methods can distinguish between Sato–Tate groups, promoting a data-driven approach to problems involving Euler factors.

Machine learning

Universes as big data

Machine learning is a powerful tool for sifting through the landscape of possible universes that could derive from Calabi-Yau manifolds.

Number theory

Reflexions on Mahler

Using Newton polynomials from reflexive polygons, we find that the Mahler measure and dessins d’enfants are in one-to-one correspondence.

String theory

QFT and kids’ drawings

Grothendieck’s “children’s drawings”, a type of bipartite graph, link number theory, geometry, and the physics of conformal field theory.

Machine learning

Neurons on amoebae

Machine learning of 2-dimensional amoebae from algebraic geometry and string theory recovers the complicated conditions arising from so-called lopsidedness.

Group theory

New approaches to the Monster

An edited record of the last set of lectures given by John McKay, founder of the Moonshine Conjectures, whose proof earned Borcherds the Fields Medal.

Combinatorics

Combinatoric topological strings

We find a physical interpretation, in terms of combinatorial topological string theory, of a classic result in finite group theory.